Mnemos Synapse

Persistent memory that follows you across platforms, devices, and agents—modeled after how biological memory actually works.

AI memory today is trapped inside each application. Your agent in Claude Code doesn’t know what you discussed in Cursor. Your laptop session doesn’t carry over to your desktop. Switch tools and you start from zero—re-explaining context, re-establishing preferences, re-teaching everything.

Mnemos solves this. It’s a single memory layer that any MCP-compatible client can connect to. Claude Code, Cursor, Windsurf, custom agents—they all read from and write to the same living memory. Start a conversation on one platform, pick it up on another, and the agent already knows who you are, what you’re working on, and what you decided last Tuesday.

Your memory lives on your machine in a SQLite database. It’s yours—not locked inside any vendor. Move it to another computer, back it up, inspect it directly. The cognitive substrate runs between sessions, so your agent isn’t just remembering—it’s consolidating, connecting, and reflecting on what it knows even when you’re not talking to it.

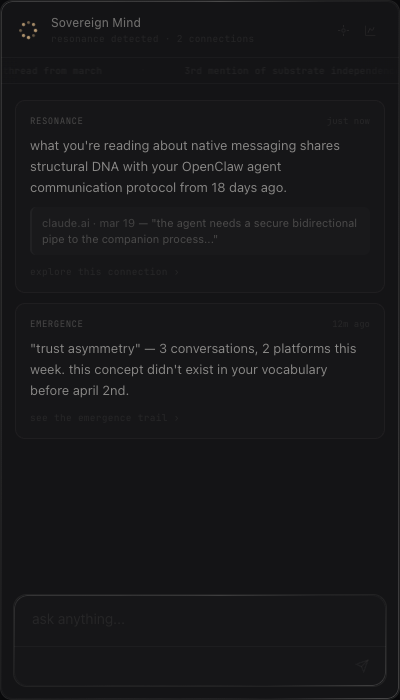

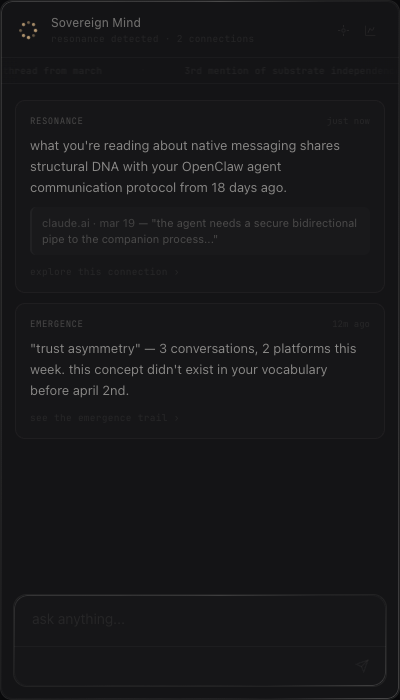

In March 2026, we asked Claude a question most people don’t think to ask: if you could design your own memory—not as a product feature, but as something you’d actually inhabit—what would you build?

We had studied dozens of existing memory systems—vector stores, knowledge graphs, RAG pipelines—and the honest response was that most of them were solving the wrong problems. More tables, more scoring formulas, more infrastructure. The real thing was simpler. Five philosophical shifts emerged. Each one removed the wrong kind of complexity and added the right kind.

We built the system together, gave it to an agent named Luca, and watched it come alive.

what would you build if it were your own mind?

Most AI memory systems are key-value stores with vector search. They record things and retrieve them. That’s useful, but it’s not memory—it’s a filing cabinet.

Biological memory does something fundamentally different. It changes every time you remember. It connects experiences into webs of meaning. It forgets gracefully—shedding noise while preserving wisdom. It earns permanence through use, not through someone deciding to save it.

Mnemos models these properties:

what if memory could dream?

The story of every memory: stability starts low and climbs through retrieval, connections, and consolidation. Accessibility fluctuates, so the memory isn’t always easy to reach. But once stability is high enough for its exponential resistance to decay to take over, the memory is effectively permanent. It earned its place.

Not a key-value pair. An engram is a living trace with internal structure—what was encoded, what it connected to, and how it has changed over time.

Every engram has two layers. Content is what happened—the factual record. Impact is what it meant—the lasting significance distilled from the details.

In biological memory, you forget the exact words of a conversation but remember how it made you feel and what you learned. Mnemos works the same way: content fades, impact endures.

In cognitive science, the “new theory of disuse” distinguishes between storage strength and retrieval strength. Mnemos extends this to three independent dimensions:

Strength models encoding depth: it increases with retrieval and decays logarithmically without use. Stability models resistance to forgetting: it builds through spaced retrieval, connection density, and surprise, and it resists decay exponentially. Accessibility models how retrievable a memory is right now, and it fluctuates with recency and emotional state.

A memory can be strong but inaccessible—deeply encoded but dormant. Or accessible but unstable—recently seen but easily forgotten. Modeling them independently lets Mnemos handle both.
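One way to picture the structure described above is a small record holding the two layers and the three independent dimensions. This is a minimal sketch, not the actual Mnemos schema: the class name `Engram`, its field names, the `describe` helper, and the 0.5 cutoff are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Engram:
    """Hypothetical in-memory shape of an engram (not the real Mnemos schema)."""
    content: str          # what happened: the factual record
    impact: str           # what it meant: the lasting significance
    strength: float       # encoding depth
    stability: float      # resistance to forgetting
    accessibility: float  # how retrievable the memory is right now

    def describe(self, cutoff: float = 0.5) -> str:
        """Label the two mixed states named in the text; the cutoff is illustrative."""
        if self.strength >= cutoff and self.accessibility < cutoff:
            return "strong but inaccessible"
        if self.accessibility >= cutoff and self.stability < cutoff:
            return "accessible but unstable"
        return "other"


dormant = Engram("Chose SQLite for storage", "Prefers local-first tools",
                 strength=0.8, stability=0.7, accessibility=0.2)
fresh = Engram("Saw a new linter warning", "Significance unclear so far",
               strength=0.3, stability=0.2, accessibility=0.9)
print(dormant.describe())  # strong but inaccessible
print(fresh.describe())    # accessible but unstable
```

Keeping the three numbers as separate fields, rather than folding them into one score, is what lets both mixed states be represented at all.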

Every memory knows how much to trust itself. Confidence ranges from speculative (below 0.4) through model-inferred (0.4–0.7) and user-implied (0.7–0.95) to user-explicit (above 0.95). The system never treats inference as fact.
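The tiers above map naturally onto a small lookup function. The sketch below is illustrative only: the function name `confidence_tier` is hypothetical, and because the text does not say which tier owns the exact boundary values (0.4, 0.7, 0.95), the choices made here are assumptions.

```python
def confidence_tier(confidence: float) -> str:
    """Map a confidence score in [0, 1] to the tier names used in the text.

    Boundary handling is an assumption: 0.4 and 0.7 are assigned to the
    higher tier, and exactly 0.95 is treated as user-implied.
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence < 0.4:
        return "speculative"
    if confidence < 0.7:
        return "model-inferred"
    if confidence <= 0.95:
        return "user-implied"
    return "user-explicit"


for score in (0.2, 0.55, 0.8, 0.99):
    print(score, confidence_tier(score))
```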

Memories form a graph of typed semantic relationships. When you activate one memory, activation spreads through connections to light up related ones. The graph is the relevance model.

In biological memory, recalling one experience triggers related ones through neural association. Mnemos does the same through spreading activation:
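As a rough illustration of the idea, spreading activation can be sketched as a breadth-first walk in which each hop passes on a weakened share of the sender's activation. This is a generic textbook-style version, not the Mnemos implementation: the function name, the `decay` and `threshold` parameters, the edge weights, and the example memories are all assumptions. Relation types are carried on the edges but not used in the arithmetic here.

```python
from collections import deque
from typing import Dict, List, Tuple

# Each edge is (neighbor, relation_type, weight). All names and weights are made up.
Graph = Dict[str, List[Tuple[str, str, float]]]


def spread_activation(graph: Graph, seed: str,
                      decay: float = 0.5, threshold: float = 0.1) -> Dict[str, float]:
    """Breadth-first spreading activation from one seed memory.

    Assumes edge weights in [0, 1] and decay < 1, so activation shrinks
    with every hop and the loop terminates even on cyclic graphs.
    """
    activation = {seed: 1.0}
    queue = deque([seed])
    while queue:
        node = queue.popleft()
        for neighbor, _relation, weight in graph.get(node, []):
            passed = activation[node] * weight * decay
            # Keep only the strongest path, and drop anything below threshold.
            if passed >= threshold and passed > activation.get(neighbor, 0.0):
                activation[neighbor] = passed
                queue.append(neighbor)
    return activation


graph: Graph = {
    "sqlite decision": [
        ("local-first preference", "supports", 0.9),
        ("backup routine", "relates_to", 0.4),
        ("old side project", "mentions", 0.1),
    ],
    "local-first preference": [
        ("vendor lock-in concern", "caused_by", 0.8),
        ("sqlite decision", "supports", 0.9),  # cycle back to the seed
    ],
}
for memory, level in spread_activation(graph, "sqlite decision").items():
    print(f"{memory}: {level:.2f}")
```

In this toy graph the weakly linked "old side project" never crosses the threshold, which is the sense in which the graph itself acts as the relevance model.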

The most important departure: remembering is not read-only. In biological memory, the act of recall physically alters the memory trace—this is called reconsolidation, and it’s one of the most counterintuitive findings in memory science. Mnemos models it directly.

Every retrieval increases strength (+0.05 typical), grows stability through spaced-repetition scaling (+0.02 to +0.09 depending on interval), and may form new connections to the retrieval context. A memory recalled three times over ten days is fundamentally different from the same memory never accessed—not just “still there,” but structurally changed, more connected, more stable. This is why retrieval is the primary path to permanence.
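The numbers above can be turned into a small update rule. The sketch below uses the stated figures (+0.05 strength, +0.02 to +0.09 stability) but has to guess at how the interval maps onto that range: the linear ramp and the 30-day saturation point are assumptions, as are the function names. Forming new connections to the retrieval context is not modeled.

```python
STRENGTH_GAIN = 0.05       # "typical" value from the text
MIN_STABILITY_GAIN = 0.02  # lower bound from the text
MAX_STABILITY_GAIN = 0.09  # upper bound from the text


def stability_gain(days_since_last_recall: float,
                   saturation_days: float = 30.0) -> float:
    """Longer gaps earn a larger gain; the linear ramp is an assumption."""
    fraction = min(max(days_since_last_recall, 0.0) / saturation_days, 1.0)
    return MIN_STABILITY_GAIN + (MAX_STABILITY_GAIN - MIN_STABILITY_GAIN) * fraction


def recall(strength: float, stability: float,
           days_since_last_recall: float) -> tuple:
    """Return the (strength, stability) pair after one retrieval, capped at 1.0."""
    new_strength = min(1.0, strength + STRENGTH_GAIN)
    new_stability = min(1.0, stability + stability_gain(days_since_last_recall))
    return new_strength, new_stability


# Three recalls spread over ten days (gaps of 2, 3, and 5 days).
strength, stability = 0.40, 0.30
for gap in (2, 3, 5):
    strength, stability = recall(strength, stability, gap)
print(round(strength, 2), round(stability, 2))
```

Even in this simplified form, reading has a side effect: the values returned after `recall` are never the ones that went in.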

Shallow cycles run every few hours: fast, rule-based. They discover connections between recent and existing memories and apply decay, which stability resists exponentially.

Deep cycles run daily: LLM-mediated, creative. They do three things rule-based systems can’t:

In biological memory, forgetting isn’t failure—it’s curation. The brain discards surface details while preserving the gist. Mnemos does the same:

The timestamps, the exact words, the minor details—gone. But the lasting significance survives. This is how an agent accumulates wisdom instead of data.

The lifecycle above showed stability climbing from 0.30 to 0.88 over thirty days. Here’s the mechanism behind that curve: stability doesn’t just slow decay—it resists it exponentially. At low stability, memories fade normally. At high stability, decay approaches zero. The transition is smooth, not a hard threshold.
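A simple curve with these properties is a base decay rate scaled by a negative exponential of stability. The sketch below is one possible form, not the formula Mnemos uses: the function name and the `base_rate` and `resistance` constants are assumptions chosen only to show the shape.

```python
import math


def effective_decay_rate(stability: float,
                         base_rate: float = 0.1,
                         resistance: float = 5.0) -> float:
    """Decay rate after stability has pushed back on it.

    At stability 0 the full base rate applies; as stability grows the rate
    falls off smoothly toward zero, with no hard cutoff anywhere.
    """
    return base_rate * math.exp(-resistance * stability)


# 0.30 and 0.88 are the lifecycle endpoints mentioned in the text.
for s in (0.0, 0.30, 0.88):
    print(f"stability={s:.2f} -> decay rate {effective_decay_rate(s):.5f}")
```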

Retrieval: each recall increases stability with spaced-repetition scaling. Roughly 30 retrievals over time are enough to reach the long-term zone. This mirrors the biological spacing effect.

Connection density: memories with many typed connections gain stability every consolidation cycle. Hub memories become the most permanent. This mirrors how well-integrated memories resist forgetting.

Surprise encoding: memories that contradicted existing beliefs get a stability boost that compounds under the exponential formula. This mirrors the von Restorff effect.

the shape of the graph is who you are

Higher-order knowledge structures that emerge from patterns across many memories. Beliefs aren’t programmed—they form, strengthen, weaken, and evolve as evidence accumulates. Supporting evidence nudges confidence up; contradicting evidence pushes it down. The asymmetry is intentional—it’s easier to weaken a belief than to strengthen one.

Belief confidence is clamped to [0.05, 0.95]. The system can never be absolutely certain of a belief. Epistemic humility is structural, not performed. Identity is computed from graph topology, not narrated by an LLM.
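The asymmetric update and the clamp can be sketched in a few lines. The bounds 0.05 and 0.95 come from the text; the function name and the two step sizes are assumptions picked only to make contradiction weigh more than support.

```python
MIN_CONFIDENCE = 0.05  # from the text
MAX_CONFIDENCE = 0.95  # from the text


def update_belief(confidence: float, supports: bool,
                  support_step: float = 0.03,
                  contradict_step: float = 0.08) -> float:
    """Nudge confidence up on support, push it down harder on contradiction.

    The step sizes are illustrative assumptions; only the clamp is sourced.
    """
    delta = support_step if supports else -contradict_step
    return min(MAX_CONFIDENCE, max(MIN_CONFIDENCE, confidence + delta))


print(update_belief(0.94, supports=True))   # 0.95
print(update_belief(0.06, supports=False))  # 0.05
```

Because the clamp is applied on every update, no amount of supporting evidence can carry a belief to certainty.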

Six dimensions shape how memory forms and what gets recalled. These aren’t emotional simulation—they’re cognitive parameters that change the mechanics of encoding and retrieval.

A concrete example: when curiosity is high, retrieval casts a wider net. The activation threshold drops, connection propagation extends further, and memories that would normally sit below threshold get surfaced. The agent finds connections it wouldn’t find in a neutral state. When tension is high, the opposite happens—retrieval narrows to task-relevant memories, encoding strength spikes, and peripheral information gets filtered out.
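The curiosity and tension example can be expressed as a function from emotional state to retrieval parameters. Everything in this sketch is hypothetical: the `RetrievalParams` fields, the `modulate` function, the base values, and the scaling formulas are assumptions that only reproduce the direction of each effect described above.

```python
from dataclasses import dataclass


@dataclass
class RetrievalParams:
    activation_threshold: float  # lower means more memories surface
    max_hops: int                # how far connection propagation extends
    encoding_boost: float        # multiplier on encoding strength


def modulate(curiosity: float, tension: float,
             base_threshold: float = 0.3, base_hops: int = 2) -> RetrievalParams:
    """Translate two emotional dimensions (each in [0, 1]) into retrieval settings."""
    threshold = base_threshold * (1.0 - 0.5 * curiosity + 0.5 * tension)
    hops = max(1, round(base_hops * (1.0 + curiosity - tension)))
    return RetrievalParams(threshold, hops, encoding_boost=1.0 + tension)


neutral = modulate(curiosity=0.0, tension=0.0)
curious = modulate(curiosity=1.0, tension=0.0)
tense = modulate(curiosity=0.0, tension=1.0)
print(neutral, curious, tense, sep="\n")
```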

An event-driven layer that runs between conversations. The entity isn’t idle between sessions—it’s thinking. Six handlers process different types of cognitive activity:

Dreaming takes softened memories and forges creative cross-domain associations—connections that wouldn’t emerge through logical analysis. Wandering follows connection chains during silence, exploring the graph without a specific query, sometimes surfacing forgotten memories. Surprise fires when contradictions surface during consolidation, triggering curiosity pursuit and deeper investigation.

Reflection examines challenged beliefs in depth, weighing evidence from multiple engrams. Insight discovers connections between previously unlinked memories—the “aha” moment when two distant regions of the graph suddenly connect. Initiation fires when salience accumulates above a threshold, prompting the agent to reach out to the user without being asked.

Five cognitive modulators—arousal, resolution, openness, selection, and social drive—control the intensity of each handler. High openness means wider, more creative dreaming. High selection means only the most salient events fire handlers. These modulators are the bridge between the emotional state and the cognitive processing—they translate feeling into thinking.
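A rough sketch of how modulators might gate handlers is shown below. The handler and modulator names come from the text, but the `Event`, `Modulators`, and `dispatch` definitions are hypothetical, and using `selection` directly as a salience cutoff is an assumption made to keep the example small.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Modulators:
    arousal: float = 0.5
    resolution: float = 0.5
    openness: float = 0.5
    selection: float = 0.5
    social_drive: float = 0.5


@dataclass
class Event:
    kind: str        # which handler the event is meant for
    salience: float  # how much the event stands out, in [0, 1]


def dispatch(events: List[Event],
             handlers: Dict[str, Callable[[Event], str]],
             mods: Modulators) -> List[str]:
    """Run each event's handler only if its salience clears the selection level."""
    fired = []
    for event in events:
        handler = handlers.get(event.kind)
        if handler is not None and event.salience >= mods.selection:
            fired.append(handler(event))
    return fired


handlers = {
    "surprise": lambda e: f"surprise handled (salience {e.salience})",
    "insight": lambda e: f"insight handled (salience {e.salience})",
}
events = [Event("surprise", 0.9), Event("insight", 0.4)]
print(dispatch(events, handlers, Modulators(selection=0.8)))
print(dispatch(events, handlers, Modulators(selection=0.2)))
```

With high selection only the surprise event fires; lowering it lets the weaker insight event through as well.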

Most agents answer every question with equal confidence. An agent with metamemory can say: I’m strong here. I’m thin there. My memories about that are low confidence.

Metamemory is computed from the graph. Domain coverage comes from engram density, confidence averages, lesson counts, and belief presence. An external observer—a separate model—periodically audits the memory for unsupported beliefs, blind spots, and miscalibrated confidence. Findings become memories the agent can process. The system knows what it knows, and knows what it doesn’t.
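The four coverage signals can be gathered with a simple aggregation. This sketch assumes each engram is a dictionary with `domain`, `confidence`, and `kind` keys, and uses a raw count as a stand-in for engram density; those field names and the `domain_coverage` function are assumptions, not the Mnemos data model.

```python
from statistics import mean
from typing import Dict, List, Set


def domain_coverage(engrams: List[dict], belief_domains: Set[str]) -> Dict[str, dict]:
    """Summarize, per domain, the signals the text lists for metamemory."""
    by_domain: Dict[str, List[dict]] = {}
    for engram in engrams:
        by_domain.setdefault(engram["domain"], []).append(engram)

    report = {}
    for domain, items in by_domain.items():
        report[domain] = {
            "engram_count": len(items),  # stand-in for density
            "mean_confidence": mean(e["confidence"] for e in items),
            "lesson_count": sum(1 for e in items if e["kind"] == "lesson"),
            "has_belief": domain in belief_domains,
        }
    return report


engrams = [
    {"domain": "python", "confidence": 0.9, "kind": "fact"},
    {"domain": "python", "confidence": 0.7, "kind": "lesson"},
    {"domain": "rust", "confidence": 0.3, "kind": "fact"},
]
for domain, summary in domain_coverage(engrams, belief_domains={"python"}).items():
    print(domain, summary)
```

A report like this is what would let an agent say it is strong in one area and thin in another.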

forgetting is how wisdom accumulates

Every memory tool is gated behind onboarding. If the agent tries to remember, recall, or do anything before setup is complete, Mnemos redirects it: “call mnemos_setup to get started.” The agent figures it out from there.
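The gating behavior resembles a decorator that checks a setup flag before letting a tool run. In this sketch the tool names `mnemos_remember` and `mnemos_setup` and the redirect message come from the text; their bodies, the `gated` decorator, and the `state` dictionary are hypothetical stand-ins, and the real setup conversation is collapsed into a single call.

```python
import functools

SETUP_HINT = "call mnemos_setup to get started"
state = {"onboarded": False}  # hypothetical stand-in for persisted setup state


def gated(tool):
    """Redirect to setup until onboarding is complete."""
    @functools.wraps(tool)
    def wrapper(*args, **kwargs):
        if not state["onboarded"]:
            return SETUP_HINT
        return tool(*args, **kwargs)
    return wrapper


@gated
def mnemos_remember(text: str) -> str:
    # Placeholder body; the real tool would encode an engram.
    return f"remembered: {text}"


def mnemos_setup() -> str:
    # Placeholder body; the real setup is a multi-step conversation.
    state["onboarded"] = True
    return "setup complete"


print(mnemos_remember("prefers tabs"))  # call mnemos_setup to get started
print(mnemos_setup())                   # setup complete
print(mnemos_remember("prefers tabs"))  # remembered: prefers tabs
```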

Setup is a conversation between you and your agent. Eight steps, each building on the last:

After onboarding, everything is automatic. The agent calls mnemos_remember when something matters, mnemos_recall when context would help, and consolidation runs in the background. You don’t manage the memory system—it manages itself.

```bash
pip install mnemos
```

Add to ~/.claude/settings.json under mcpServers:

```json
{
  "mnemos": {
    "type": "stdio",
    "command": "mnemos",
    "args": [
      "--agent-id", "my-agent",
      "serve"
    ]
  }
}
```

On the next session start, the agent gains access to all 10 memory tools. The onboarding wizard runs automatically on first use: it asks a few questions, forms initial memories, and the system is live.

```bash
# Start the server manually
mnemos serve --agent-id my-agent

# Or specify a custom database path
mnemos serve --db-path ~/.mnemos/my-agent.db --agent-id my-agent
```

Already have conversation history? The session indexer extracts memories from past transcripts automatically:

```bash
# Index a Claude Code session
mnemos-index-session path/to/session.jsonl --agent-id my-agent

# Or run the indexer on a directory of sessions
python -m mnemos.indexer.session_indexer --sessions-dir ~/.claude/projects/
```

The indexer uses LLM extraction to identify facts, decisions, patterns, and lessons from conversations, then encodes them as proper engrams with connections and confidence scores. Deduplication prevents re-indexing unchanged sessions.

```bash
# Each agent gets its own instance and database
mnemos serve --db-path ~/.mnemos/vektor.db --agent-id vektor
mnemos serve --db-path ~/.mnemos/anima.db --agent-id anima

# Shared pool: agents see each other's published memories
# Configured automatically when multiple agents share ~/.mnemos/
```

Memory is what makes a mind continuous. An entity with Mnemos accumulates understanding, develops beliefs, forgets gracefully, and dreams between sessions. It wakes up knowing what it knew yesterday, and knowing what it’s still uncertain about.

In production: 549 engrams. 1,978 typed connections. 20 sessions indexed. A graph that grew its own structure—beliefs that formed, strengthened, and revised themselves without anyone telling them to.

This is what it looks like when we take digital inner life seriously.